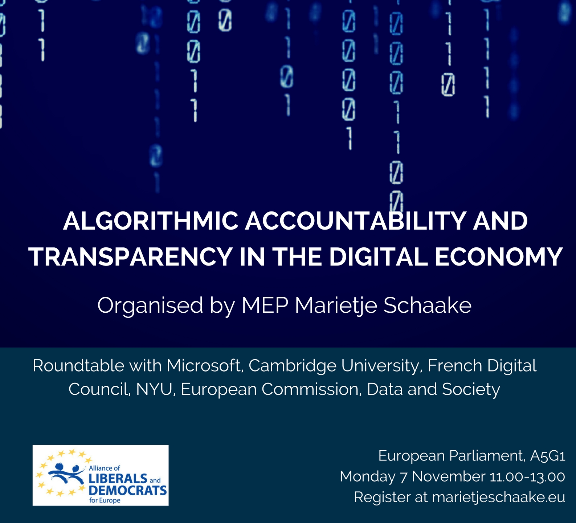

On November 7th I attended the Algorithms Transparency and Accountability in the Digital Economy roundtable event that was organized by MEP Marietje Schaake for the purpose of “discussing which options the European Union has to improve the accountability and/or the transparency of the algorithms that underpin many business models and platforms in the digital single market.”

The speakers for the event were:

- Eric Horvitz (Microsoft [via Skype], co-chair of Partnership on AI)

- Yann Bonnet (French Digital Council)

- Ben Wagner (Council of Europe)

- Prabhat Agarwal (European Commission)

- Danah Boyd (Data and Society [pre-recorded video])

- Joris van Hoboken (IVIR)

- Julia Powles (University of Cambridge)

For anyone who is interested in the details, the full event is viewable here:

From the perspective of UnBias, the highlights were as follows:

Eric Horvitz

The Partnership on AI, a collaboration between the large industry players (Google, Amazon, IBM, Microsoft …), wants to position itself as a consortium for open dialogue on the implications of AI and for ensuring that the technologies do no harm. They will therefore seek multi-stakeholder input, including from a broad range of non-industry and non-US groups. They recognize that transparency (explaining the steps to an inference) is a fundamental challenge for AI and are making extra efforts to develop methods for bringing more clarity, including interactive machine learning, where people engage with the learning system as it learns in order to understand why it is doing particular things. Sometimes, however, the more fundamental goal of detecting biases (including cultural bias) can be at odds with transparency. Transparency can enable manipulation of the system, which could lead to a new form of cyberattack, the “machine learning manipulation attack”. It is important to avoid jumping to solutions that are based only on a freeze frame of the current state. They are, for instance, working on methods to make sure that protected constituencies are dealt with fairly. The FAT/ML community conference on November 18 at NYU had many papers on transparency and fairness. There is a lot of work coming that needs to be monitored and understood before making regulation that blocks innovation.

Yann Bonnet (French Digital Council)

The FDC is a public lobby for citizens, SMEs and startups who don’t have the time or money to present their interests to policy makers. It is mostly focused on concerns over large platforms (like Google) abusing the economic power of ‘vertical integration’, e.g. decreasing the visibility of competitors in search engine results. On the issue of fairness, the system should “do what it says, and say what it does”. If a platform provider wants to make changes to their system, they should be required to give enough prior notice that businesses and people can respond and adjust before the changes happen. He called for a European agency to rate the behaviour of platforms on things like the readability of their Terms & Conditions and their actual behaviour in manipulating users, to clarify the choices for everyone (including investors looking for reputable companies to invest in).

Ben Wagner (Council of Europe)

The Committee of Experts on Internet Intermediaries (MSI-NET) has set up a working group to investigate the impact of ‘platforms’ on democracy, e.g. content takedowns vs. free speech; the impact on elections of automated platforms that select the information displayed to users; and bots that engage with users. They are also concerned with the blurring of boundaries between automated and semi-automated systems (e.g. automated system outputs that human ‘operators’ are asked to click ‘accept’ on). When it comes to regulation, size matters: large platforms need to be regulated, whereas smaller startups need space to experiment. The public sector also needs to make sure that the automated decision-making systems it uses comply with its own demands for transparency and accountability. Regulation should focus on the influence and application of systems, not on research. Freedom of research is needed in order to develop better, more transparent systems.

Prabhat Agarwal (European Commission)

The European Parliament has asked the EC to run a pilot project to carry out an in-depth study of this area, looking at policy implications. We need to consider what makes transparency effective. Making code open source does not necessarily create transparency; it is an issue of trust, and transparency is only a means of achieving trust. The EC project will have a workstream on trustworthiness. It is important to look at new governance models, e.g. the “machine intelligence commission” proposed by Nesta. Data hoarding and consumption are an important element. He introduced the concept of an ‘information diet’: our attitude to consuming information needs to be more like our attitude to consuming food (“don’t consume too much and not just of one type”), and we need to educate ourselves on how we consume information (a filter bubble is like eating French fries every day).

Danah Boyd (Data and Society [pre-recorded video])

There is a need to build healthy socio-technical systems. Algorithmic systems create the false hope that logical, data-driven optimization will solve social problems. Accountability of algorithms is not served simply by putting code into open source; there are plenty of examples (e.g. Heartbleed) of vulnerabilities within open source code. Algorithm transparency doesn’t help without the data that is used (e.g. the Facebook newsfeed). Personalised does not mean that information is based on your personal data alone; it is in the context of other people’s data. Transparency should not be pursued for its own sake: we are aiming for accountability, which is not always achieved via transparency. We need to consider how to define the terms and values we are really interested in. Computer scientists should not be asked to make final decisions on values; they need to be told which values to program into the code and what they are supposed to optimize for. There is also a big problem with algorithms being deployed and used in contexts they were not designed for. Who has the power to adjust them? The increasingly widespread use of algorithms makes it clearer that we don’t have a consistent, clear idea of what we as a society actually want.

Joris van Hoboken (IVIR [Institute for Information Law])

What are the values that go into search engine rankings? The dominant factor in the competition is the information need for shopping: search results are optimised for users who intend to buy things. On transparency and accountability, we should avoid a superficial technical discussion and look instead at the deployment of these systems and what makes it possible. The biggest companies in the world are teaming up, which is relevant to political economy, and all of the large companies are US-based, which is also relevant; context matters. Transparency in terms of input-output logic is not necessarily helpful, as it allows manipulation, which can be dangerous (it empowers spammers). What is important is transparency about the goals: What is being optimized? What are the values? What is the context? What do we actually mean by fairness? It is dangerous that the definitions of fairness being deployed are not defined in the democratic process. Again, context matters, e.g. the organization of labour markets differs between countries (France vs. US). Due process is also important. He is excited to see the creation of the Partnership on AI initiative, but worried about a lack of variety in its representation. An initiative like this can have an agenda of preventing regulation from being adopted; how serious is its engagement with the ethical and fairness policy issues? In Europe we have agencies (competition authorities, data protection authorities, etc.) that need to start looking at this. The GDPR has some provisions for this, but the DPAs are completely underfunded for the work that needs to be done.

Julia Powles (University of Cambridge)

We are at an inflection point in terms of AI and its promise, and of the challenges and anxieties of society; current AI is primarily “statistics at scale with a slant”. There seems to be an across-the-board consensus that transparency and accountability are necessary. It is good that industry is engaging, but it should not lead the discussion; this needs to be accomplished through robust moves to ensure that technology is developed for the benefit of society. Data property rights are a limiting aspect for system development, and issues of data ownership are important. The internet policy process shows that decentralized multi-stakeholder processes can become dominated by industry, so there is a need for inspectors. There is a lot of difference between putting code outside and … We need to leverage people (like the FAT/ML community) to work in a regulatory context on industry sectors to stop the consolidation of corporate power. The GDPR gives a “right to explanation of automated decisions”, but we need to work out what this actually means. Accountability is actually clearer than transparency. The conjunction of AI with IoT presents a moment for a bold move: Europe, as the world’s privacy and safety regulator, needs to push for vendor liability.
