On October 25th we presented our Science and Technology Options Assessment (STOA) report on “a governance framework for algorithmic accountability and transparency” to the Members of the European Parliament and the European Parliament Research Services “Panel for the Future of Science and Technology”.

Category Archives: Parliamentary Inquiry

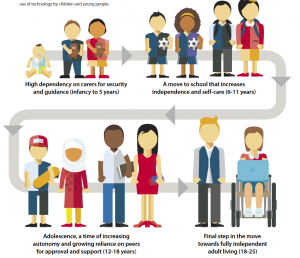

Age-Appropriate Design Code – call for evidence by the ICO

As of May 25th 2018 the Data Protection Act 2018 (DPA2018) has taken effect in the UK, supporting and supplementing the implementation of the EU General Data Protection Regulation (GDPR).

An important requirement in the DPA2018, going beyond the GDPR, is the inclusion of an Age-Appropriate Design Code (section 123 of DPA2018). The Code is to provide guidance on the design standards that the Information Commissioner’s Office (ICO) will expect providers of online ‘Information Society Services’ (ISS) likely to be accessed by children to meet.

The ICO is responsible for drafting the Code and has issued a call for evidence, which is the first stage of the consultation process.


On 16th April the House of Lords Select Committee on Artificial Intelligence published a report called ‘AI in the UK: ready, willing and able?’. The report is based on an inquiry conducted by the House of Lords to consider the economic, ethical and social implications of advances in artificial intelligence. UnBias team member Ansgar Koene submitted written evidence based on the combined work of the UnBias investigations and our involvement with the development of the IEEE P7003 Standard for Algorithmic Bias Considerations.

UnBias submissions to UK Parliamentary inquiries on “Fake News” and “Algorithms in decision-making”

Prior to the June 8th snap election there were two Commons Select Committee inquiries that both touched directly on our work at UnBias and for which we submitted written evidence: one on “Algorithms in decision-making” and another on “Fake News”.