On September 7th the Guardian published an article drawing attention to a study from Stanford University that applied deep neural networks (a form of machine learning AI) to test whether they could distinguish people’s sexual orientation from facial images. After reading both the original study and the Guardian’s report on it, I found so many problematic aspects in the study that I immediately had to write a response, which was published in the Conversation on September 13th under the title “Machine gaydar: AI is reinforcing stereotypes that liberal societies are trying to get rid of”.

Continue reading In the Conversation: “Machine gaydar: AI is reinforcing stereotypes that liberal societies are trying to get rid of” →

In the current BBC series Secrets of Silicon Valley, Jamie Bartlett (technology writer and Director of the Centre for Social Media Analysis at Demos) explores the ‘dark reality behind Silicon Valley’s glittering promise to build a better world’. Episode 2, The Persuasion Machine, shines a spotlight on several of the issues we are investigating in UnBias.

Continue reading Algorithms and the persuasion machine →

On 21st March the House of Lords Select Committee on Communications published a report called ‘Growing up with the internet’. The report is based on an enquiry conducted by the House of Lords into Children and the Internet. UnBias team member Professor Marina Jirotka served as a specialist advisor to the enquiry, and team member Professor Derek McAuley gave verbal evidence to it, elaborating on the written evidence submitted by Perez, Koene and McAuley.

Continue reading “Growing up Digital” UnBias team members contribute to House of Lords report →

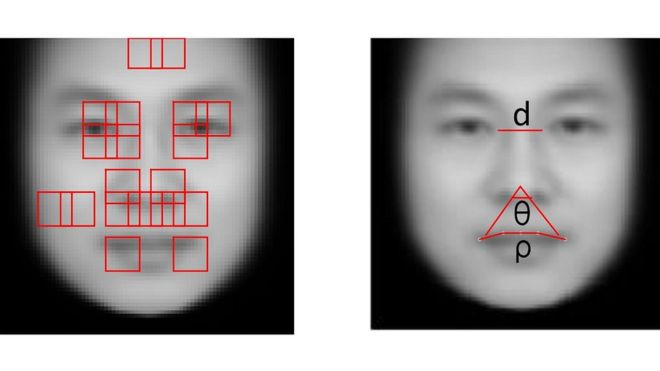

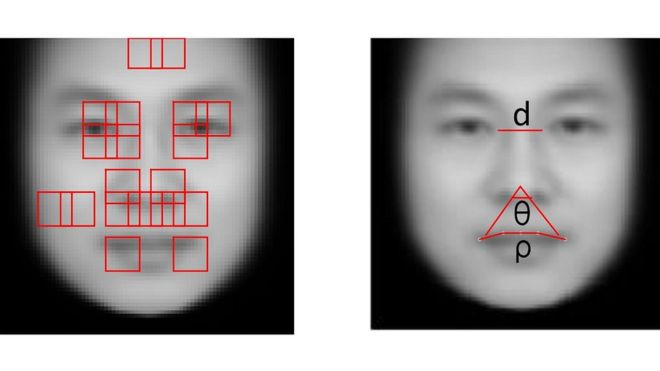

A recent report from the BBC covers one instance of the ever-growing use of algorithms for social purposes and helps us to illustrate some key ethical concerns underpinning the UnBias project.

Continue reading The need for a responsible approach to the development of algorithms →

In an almost suspiciously conspiracy-like fashion, the official launch of UnBias at the start of September was immediately accompanied by a series of news articles providing examples of problems with algorithms that make recommendations or control the flow of information. Examples include the unintentional racial bias in a machine-learning-based beauty contest algorithm that was meant to remove the bias of human judges; a series of embarrassing news recommendations on the Facebook trending topics feed, the result of an attempt to avoid (the appearance of) bias by getting rid of human editors; and the controversy about Facebook’s automated editorial decision to remove the Pulitzer Prize-winning “napalm girl” photograph because the image was identified as containing nudity. My view of these events? “Facebook’s algorithms give it more editorial responsibility – not less” (published today in the Conversation).

Emancipating Users Against Algorithmic Biases for a Trusted Digital Economy
