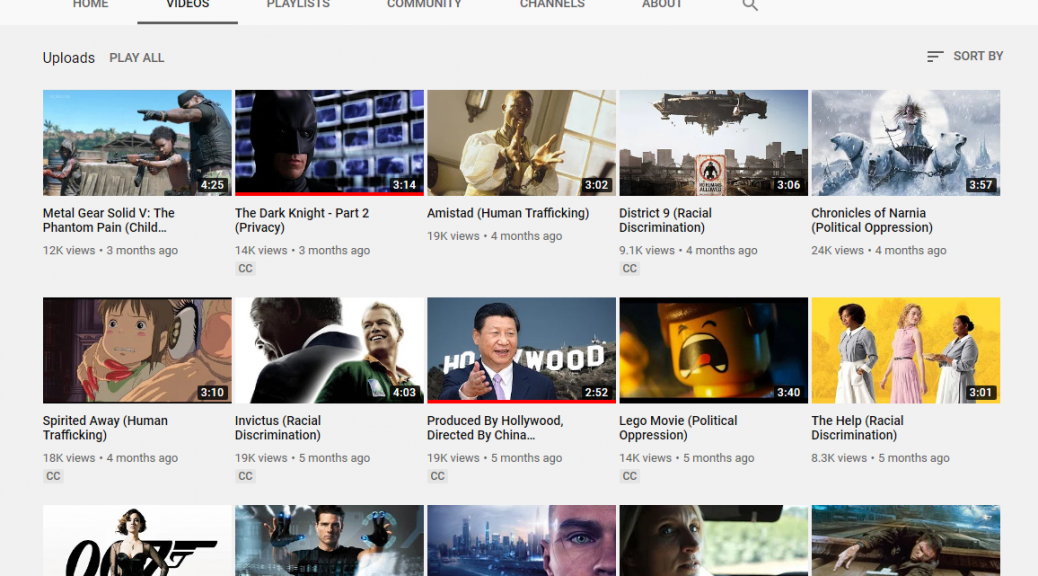

What impact do short videos about human rights have on university students' awareness of and engagement with human rights? This is the question we sought to address in a recent study in collaboration with Prof. Matthew Daniels of the Institute of World Politics, who in 2016 launched the universalrights.com YouTube channel, which features short human rights videos created by students.

Continue reading Telling Tales of Engagement: Raising Awareness of Human Rights Issues

The hackathon challenge was formulated as follows: