On September 7th the Guardian published an article drawing attention to a study from Stanford University which had applied deep neural networks (a form of machine learning AI) to test whether they could distinguish people’s sexual orientation from facial images. After reading both the original study and the Guardian’s report about it, I found so many problematic aspects in the study that I immediately had to write a response, which was published in the Conversation on September 13th under the title “Machine gaydar: AI is reinforcing stereotypes that liberal societies are trying to get rid of”.

Continue reading In the Conversation: “Machine gaydar: AI is reinforcing stereotypes that liberal societies are trying to get rid of” →

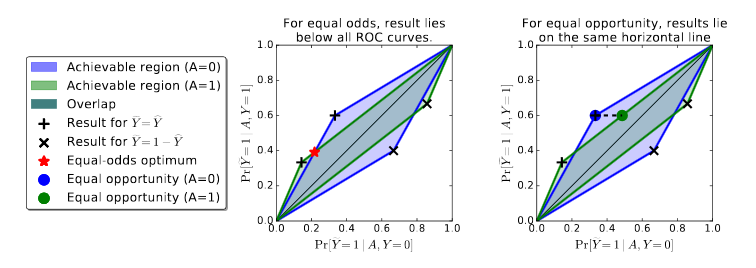

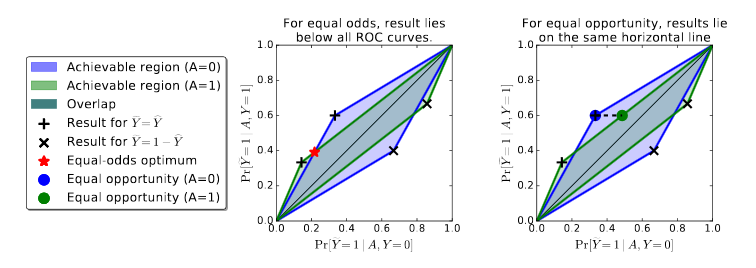

An important topic considered this year at the International Conference on Neural Information Processing Systems (NIPS), one of the world’s prime outlets for machine learning and artificial intelligence research, is the connection between machine learning, law and ethics. In particular, a paper presented by Moritz Hardt, Eric Price, and Nathan Srebro focused on “Equality of Opportunity in Supervised Learning”.

Continue reading Fair machine learning techniques and the problem of transparency →

Emancipating Users Against Algorithmic Biases for a Trusted Digital Economy