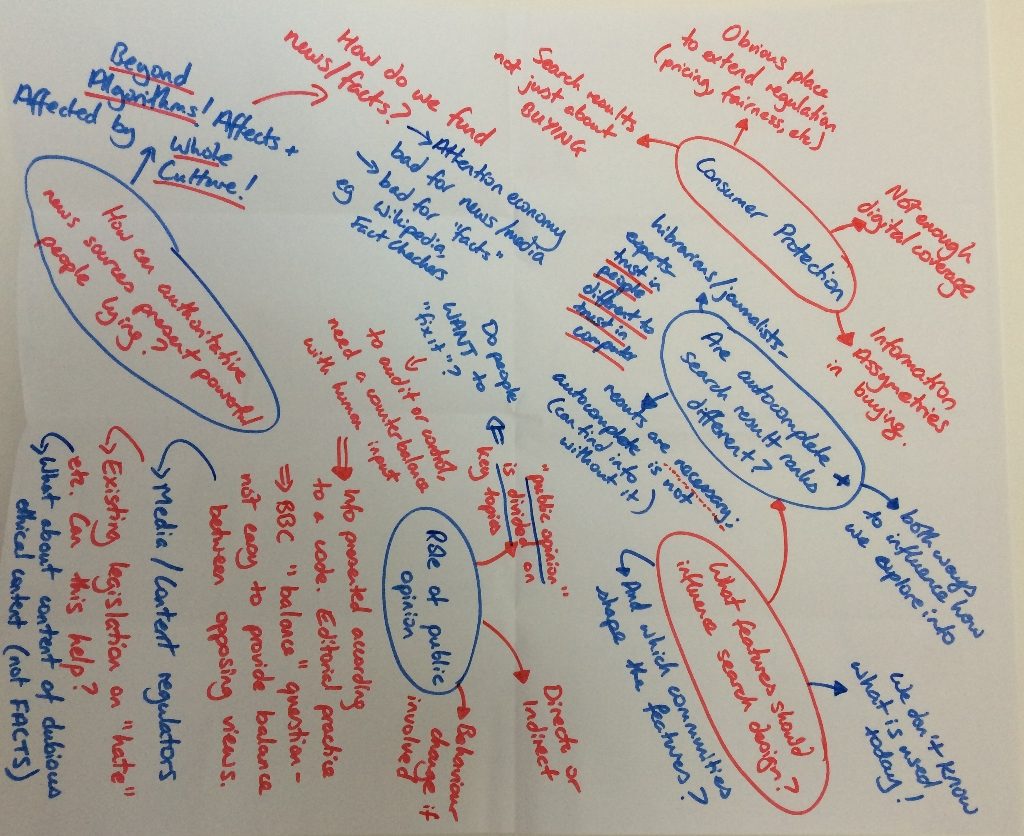

On February 3rd, a group of twenty-five stakeholders joined us at the Digital Catapult in London for our first discussion workshop.

The User Engagement workpackage of the project brings together professionals from industry, academia, education, NGOs and research institutes to discuss societal and ethical issues surrounding the design, development and use of algorithms on the internet. We aim to create a space where these stakeholders can discuss their various concerns and perspectives. This includes finding differences of opinion. For example, participants from industry often view algorithms as proprietary and commercially sensitive, whereas those from NGOs frequently call for greater transparency in algorithmic design. It is important for us to draw out these varying perspectives and understand in detail the reasoning behind them. Then, combined with the outcomes of the other project workpackages, we can identify points of resolution and produce outputs that seek to advance responsibility on algorithm-driven internet platforms.


From Thursday 24th to Monday 28th November, the Nottingham UnBias team contributed to a series of workshop/CPD events organized by the

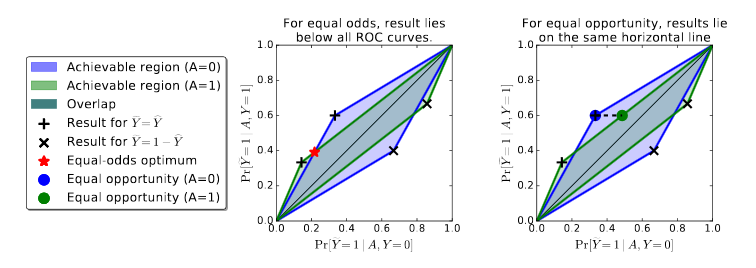

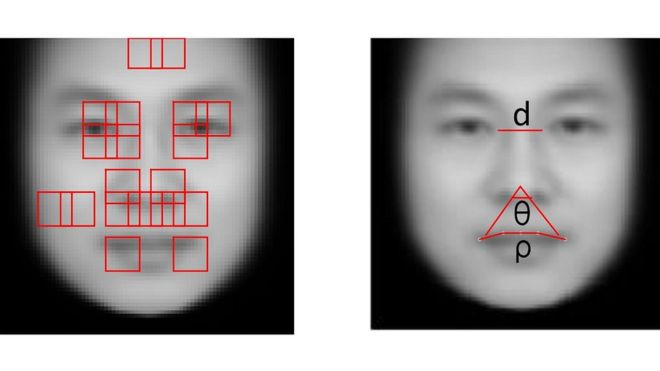

A recent report from the BBC covers one instance of the ever-growing use of algorithms for social purposes and helps us to illustrate some key ethical concerns underpinning the UnBias project.

The first youth jury sessions of the UnBias project took place last weekend and were highly interesting and thought-provoking. Despite the cold and rainy weather, we had a great turnout, with nearly 30 young people choosing to attend. Our youth jurors mostly ranged in age from 13 to 18 and took part in two interactive activities.